Freie Universität: High-Precision, Small-Footprint Mapping

Researchers at Freie Universität Berlin are rethinking how maps are stored for autonomous navigation. By using Ouster OS2 digital lidar to create ultra-lightweight bird's-eye-view maps, the team achieved sub-50 cm localization accuracy with a map footprint of just 4 MB per square kilometer.

Localization is a relatively simple task for autonomous systems operating in simple environments. While GPS provides a rough estimate, autonomy requires centimeter-level precision. Typically, this is achieved by comparing real-time lidar data against a pre-existing high-definition (HD) 3D map.

Storing the raw 3D data required to map an entire metropolitan area can consume terabytes of storage, overwhelming the onboard computers of mobile robots and driving up operational costs. Furthermore, in environments like tunnels, parking garages, or expansive highway bridges—where satellite signals fail and vertical landmarks are sparse—traditional localization systems often lose their way.

To solve this, researchers at Freie Universität Berlin have developed GroundLoc, a lidar-only localization pipeline that proves you don't need a heavy map to achieve heavy-duty precision.

The Challenge: Memory Limits

The primary bottleneck for large-scale autonomy is data density. Traditional HD maps rely on massive 3D point clouds that can consume upwards of 55 GB of data for every square kilometer of road. For a fleet operating across thousands of miles, this memory wall makes map updates and real-time retrieval nearly impossible.
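To put the per-square-kilometer figures in perspective, here is a back-of-the-envelope calculation. It uses the ~55 GB/km² raw point cloud figure above and the ~4.09 MB/km² GroundLoc map size reported in the results. The 1,000 km² service area is a hypothetical value chosen for illustration, and decimal units (1 GB = 1,000 MB) are an assumption, since the article does not specify them.

```python
# Back-of-the-envelope storage comparison using the per-km^2 figures quoted in
# this article. ASSUMPTION: decimal units (1 GB = 1000 MB).
RAW_POINT_CLOUD_MB_PER_KM2 = 55_000.0  # ~55 GB per km^2 of raw 3D point cloud
BEV_MAP_MB_PER_KM2 = 4.09              # ~4.09 MB per km^2 for the GroundLoc BEV map


def map_storage_gb(area_km2: float, mb_per_km2: float) -> float:
    """Return the total map size in GB for a region of the given area."""
    return area_km2 * mb_per_km2 / 1000.0


def compression_ratio() -> float:
    """How many times smaller the BEV map is than the raw point cloud."""
    return RAW_POINT_CLOUD_MB_PER_KM2 / BEV_MAP_MB_PER_KM2


if __name__ == "__main__":
    area = 1000.0  # HYPOTHETICAL service area in km^2, for illustration only
    print(f"raw point cloud: {map_storage_gb(area, RAW_POINT_CLOUD_MB_PER_KM2):,.0f} GB")
    print(f"BEV map:         {map_storage_gb(area, BEV_MAP_MB_PER_KM2):,.2f} GB")
    print(f"ratio:           {compression_ratio():,.0f}x")
```

For that hypothetical area, this works out to about 55,000 GB (55 TB) of raw point cloud versus about 4.09 GB of BEV map, a ratio of just over 13,400x, consistent with the "13,000x" figure.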

Beyond storage, there is a structural challenge. Most localization algorithms rely on vertical features—like walls, poles, and building facades—to lock a vehicle’s position. But the real world is messy. A bus parked in front of a wall or a highway bridge with no nearby buildings can leave a robot with no landmarks to reference. To achieve reliable, all-weather autonomy, we need a way to localize that is both data-efficient and resilient to the dynamic chaos of urban traffic.

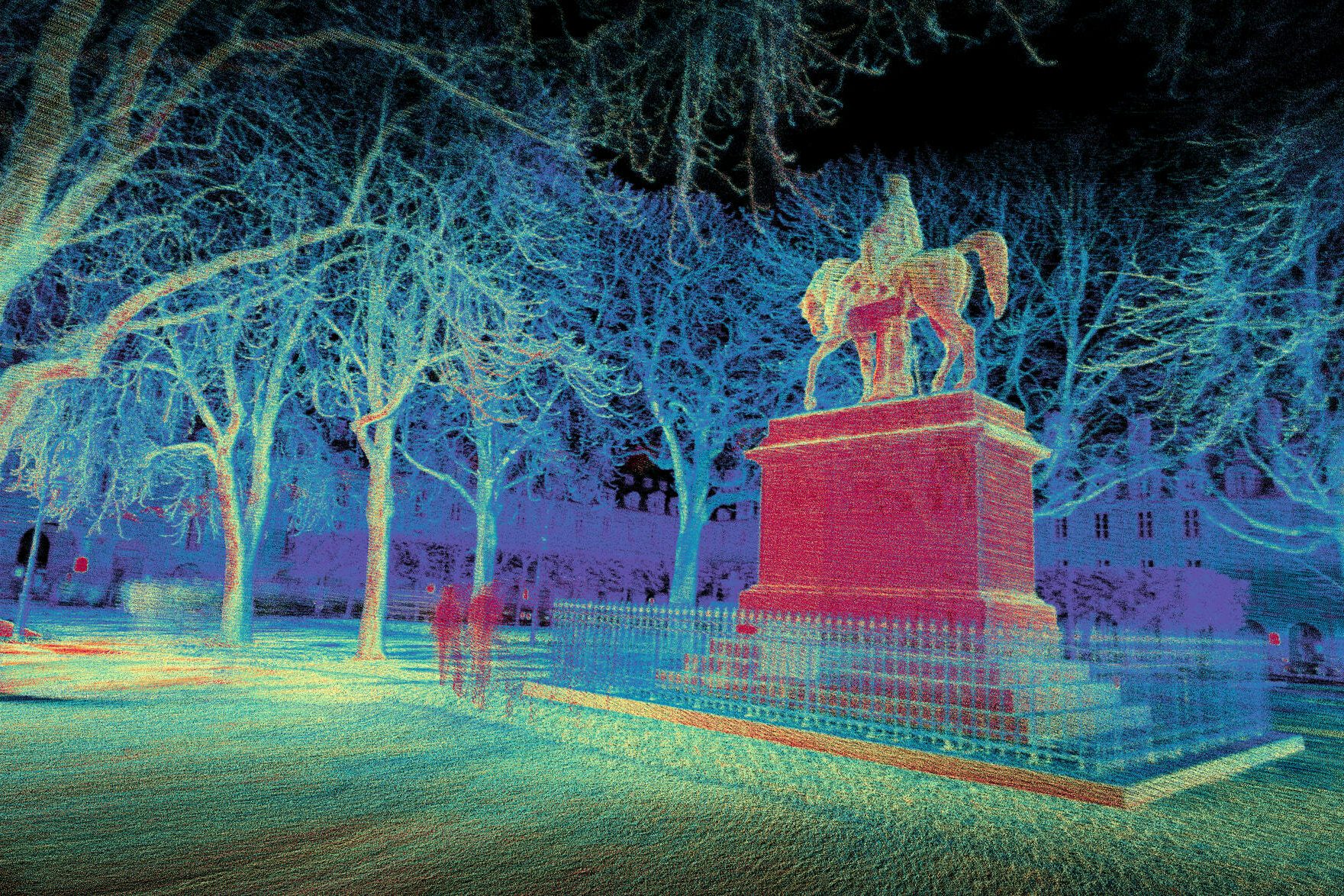

Running GroundLoc on the HeLiPR Bridge sequence using the Ouster OS2-128

The Solution: Bird’s-Eye View

Researchers at the Dahlem Center for Machine Learning and Robotics (DCMLR) at Freie Universität Berlin developed GroundLoc to look at the ground instead of the sky.

The pipeline projects Ouster lidar data into a highly compressed, 3-channel Bird’s-Eye View (BEV) representation. Think of it as a unique fingerprint for the pavement. The system focuses primarily on ground-level data, extracting three critical layers:

- Intensity: Capturing the high-contrast patterns of lane markings, pictograms, and asphalt transitions.

- Slope: Representing the local terrain gradient to identify curbs and inclines.

- Z-height Variance: Preserving the textural roughness and vertical "noise" of the ground plane.
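The three layers above can be illustrated with a minimal rasterization sketch. This is not GroundLoc's implementation: it assumes the input points have already been filtered to the ground region, and it uses illustrative definitions of our own choosing (mean intensity per cell, population variance of z per cell, and slope as the magnitude of the finite-difference gradient of mean cell height). The function names and the 0.2 m default cell size are hypothetical.

```python
import math
from collections import defaultdict
from typing import Dict, List, Tuple

Point = Tuple[float, float, float, float]  # (x, y, z, intensity) in the sensor frame
Cell = Tuple[int, int]                     # (column, row) index in the BEV grid
Channels = Tuple[float, float, float]      # (intensity, slope, z_variance)


def _gradient(z: Dict[Cell, float], prev: Cell, cur: Cell, nxt: Cell, h: float) -> float:
    """Finite-difference derivative of mean cell height along one grid axis."""
    if prev in z and nxt in z:
        return (z[nxt] - z[prev]) / (2 * h)  # central difference
    if nxt in z:
        return (z[nxt] - z[cur]) / h  # forward difference at the low-index edge
    if prev in z:
        return (z[cur] - z[prev]) / h  # backward difference at the high-index edge
    return 0.0  # isolated cell: no neighbors to compare against


def rasterize_bev(points: List[Point], cell_size: float = 0.2) -> Dict[Cell, Channels]:
    """Project (ground) points into a 2D grid with three channels per occupied cell.

    HYPOTHETICAL sketch: channel definitions are illustrative, not GroundLoc's.
    """
    buckets: Dict[Cell, List[Point]] = defaultdict(list)
    for p in points:
        cell = (math.floor(p[0] / cell_size), math.floor(p[1] / cell_size))
        buckets[cell].append(p)

    mean_z: Dict[Cell, float] = {}
    per_cell: Dict[Cell, Tuple[float, float]] = {}
    for cell, pts in buckets.items():
        n = len(pts)
        mz = sum(p[2] for p in pts) / n
        var_z = sum((p[2] - mz) ** 2 for p in pts) / n  # ground roughness
        mean_i = sum(p[3] for p in pts) / n             # lane markings, pictograms
        mean_z[cell] = mz
        per_cell[cell] = (mean_i, var_z)

    bev: Dict[Cell, Channels] = {}
    for (i, j), (mean_i, var_z) in per_cell.items():
        dzdx = _gradient(mean_z, (i - 1, j), (i, j), (i + 1, j), cell_size)
        dzdy = _gradient(mean_z, (i, j - 1), (i, j), (i, j + 1), cell_size)
        bev[(i, j)] = (mean_i, math.hypot(dzdx, dzdy), var_z)  # slope = |grad z|
    return bev
```

Storing only three numbers per occupied ground cell, rather than every raw 3D point, is what keeps this kind of representation compact; the exact cell size and encoding drive the final map footprint.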

Using a learned keypoint network, GroundLoc identifies these distinctive ground features and matches them against a prior map precisely enough to localize the vehicle to within 50 cm. Because the ground is rarely blocked entirely (even in heavy traffic), the system is inherently resilient to the moving obstacles that typically confuse 3D perception stacks.
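Once keypoints in the live BEV image have been matched to keypoints in the prior map, the vehicle pose still has to be estimated from those matches. The article does not describe GroundLoc's registration step, so the following is a generic sketch of one common approach, not the authors' method: RANSAC over putative 2D matches (to reject mismatches, for example on moving vehicles), followed by a closed-form least-squares fit of a rotation about the vertical axis plus a 2D translation. It assumes keypoints have already been converted to metric BEV coordinates; all names and thresholds are hypothetical.

```python
import math
import random
from typing import List, Optional, Sequence, Tuple

Vec2 = Tuple[float, float]
Pose2D = Tuple[float, float, float]  # (theta, tx, ty): yaw in radians, translation in meters


def fit_rigid_2d(src: Sequence[Vec2], dst: Sequence[Vec2]) -> Pose2D:
    """Closed-form least-squares rotation + translation mapping src onto dst."""
    n = len(src)
    sx, sy = sum(p[0] for p in src) / n, sum(p[1] for p in src) / n
    dx, dy = sum(q[0] for q in dst) / n, sum(q[1] for q in dst) / n
    a = b = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        px, py, qx, qy = px - sx, py - sy, qx - dx, qy - dy  # center both sets
        a += px * qx + py * qy  # cosine term of the cross-covariance
        b += px * qy - py * qx  # sine term of the cross-covariance
    theta = math.atan2(b, a)
    c, s = math.cos(theta), math.sin(theta)
    # Translation maps the rotated source centroid onto the target centroid.
    return theta, dx - (c * sx - s * sy), dy - (s * sx + c * sy)


def apply_pose(pose: Pose2D, p: Vec2) -> Vec2:
    """Transform a 2D point by the given pose."""
    theta, tx, ty = pose
    c, s = math.cos(theta), math.sin(theta)
    return c * p[0] - s * p[1] + tx, s * p[0] + c * p[1] + ty


def ransac_rigid_2d(src: Sequence[Vec2], dst: Sequence[Vec2], iters: int = 200,
                    inlier_tol: float = 0.3, seed: int = 0) -> Optional[Pose2D]:
    """Estimate a pose from matches that may contain outliers (HYPOTHETICAL parameters)."""
    if len(src) < 2:
        return None
    rng = random.Random(seed)
    idx = list(range(len(src)))
    best: List[int] = []
    for _ in range(iters):
        i, j = rng.sample(idx, 2)  # minimal sample: two matches fix a 2D rigid pose
        pose = fit_rigid_2d([src[i], src[j]], [dst[i], dst[j]])
        inliers = [k for k in idx if math.dist(apply_pose(pose, src[k]), dst[k]) < inlier_tol]
        if len(inliers) > len(best):
            best = inliers
    if len(best) < 2:
        return None
    # Refit on all inliers for the final estimate.
    return fit_rigid_2d([src[k] for k in best], [dst[k] for k in best])
```

The RANSAC stage is what tolerates a minority of bad matches; the final least-squares refit then uses every consistent ground feature.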

Digital Lidar in Action: Ouster OS2-128

The Ouster OS2-128 served as the primary benchmark sensor for the GroundLoc framework. The OS2’s 128 channels of digital data provided the high-resolution "ground signatures" necessary to distinguish subtle road features at high speeds.

The choice of a 360° digital architecture was critical. While the researchers evaluated several narrow-field-of-view and analog sensors, the Ouster OS2-128 consistently delivered the most reliable results. Its uniform data density allowed the GroundLoc pipeline to maintain a processing speed of over 14 Hz on standard consumer hardware, comfortably exceeding the requirements for real-time autonomous navigation.
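To see why a rate above 14 Hz counts as real time, compare the per-frame processing budget with the time between lidar frames. Assuming the sensor spins at 10 Hz (a common rotation rate for Ouster sensors; the article does not state the configured rate):

$$
T_{\text{proc}} \le \frac{1}{14\,\text{Hz}} \approx 71.4\,\text{ms}
\;<\; T_{\text{frame}} = \frac{1}{10\,\text{Hz}} = 100\,\text{ms}
$$

Each scan is therefore processed before the next one arrives, with roughly 29 ms of headroom per frame. The margin depends on the configured frame rate.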

GroundLoc (R2D2) localization results on the HeLiPR Town sequence using various sensors.

The Outcome: 13,000x More Efficient

The results of the GroundLoc study set a new standard for efficient, large-scale localization. Testing on the public HeLiPR and SemanticKITTI datasets demonstrated that Ouster’s hardware and GroundLoc are a formidable pair:

- 13,000x Storage Efficiency: HD maps were compressed to approximately 4.09 MB per square kilometer, down from the ~55 GB required for raw point cloud data.

- Unrivaled Precision: The system achieved an Average Trajectory Error (ATE) well below 50 cm across complex roundabout and highway bridge environments.

- Massive Reliability Gap: In multi-session matching (comparing new data to old maps), the Ouster-powered system achieved a 93.05% success rate, blowing narrow-FOV competitors out of the water.
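The article reports accuracy as an average trajectory error. In the localization literature, ATE usually stands for absolute trajectory error and is often reported as an RMSE; the paper's exact definition is not given here. The sketch below computes both the mean and the RMSE of per-pose position errors, under the assumption that the estimated and ground-truth trajectories are already time-synchronized and expressed in the same map frame. The function name is hypothetical.

```python
import math
from typing import Sequence, Tuple

Position = Tuple[float, float]  # (x, y) in meters, map frame


def trajectory_errors(est: Sequence[Position], gt: Sequence[Position]) -> Tuple[float, float]:
    """Return (mean, RMSE) of per-pose Euclidean position errors.

    ASSUMPTION: est[k] and gt[k] refer to the same timestamp and frame, so no
    additional trajectory alignment is performed.
    """
    if len(est) != len(gt) or not est:
        raise ValueError("need two non-empty trajectories of equal length")
    errs = [math.dist(e, g) for e, g in zip(est, gt)]
    mean = sum(errs) / len(errs)
    rmse = math.sqrt(sum(e * e for e in errs) / len(errs))
    return mean, rmse
```

Because RMSE weights large deviations more heavily, it is never smaller than the mean error, so which variant a paper reports affects how a "below 50 cm" figure should be read.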

Read the full paper on arXiv.

Accelerate Your Research

We are proud to support the next generation of breakthroughs in autonomy and perception. University labs and research institutions can access exclusive, pre-approved pricing on our full suite of digital lidar sensors. Reach out to our team about your use case and we can work together to find an appropriate fit.